Abstract

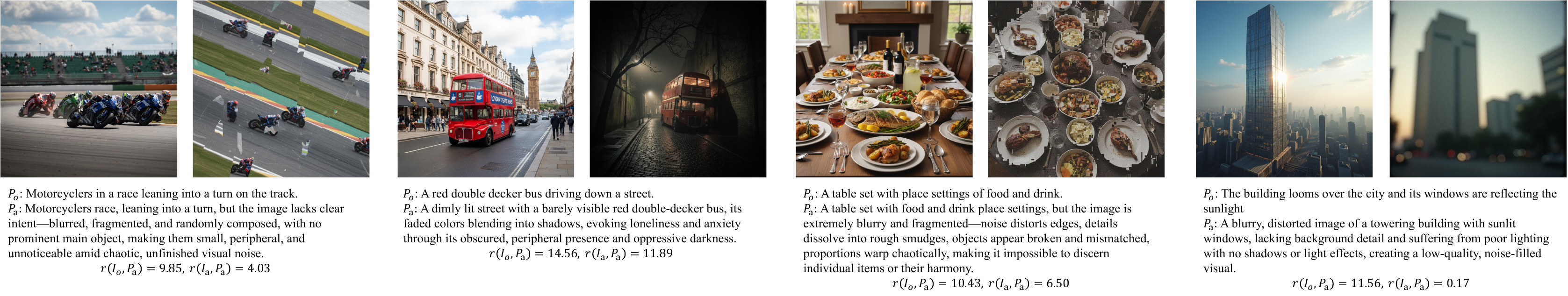

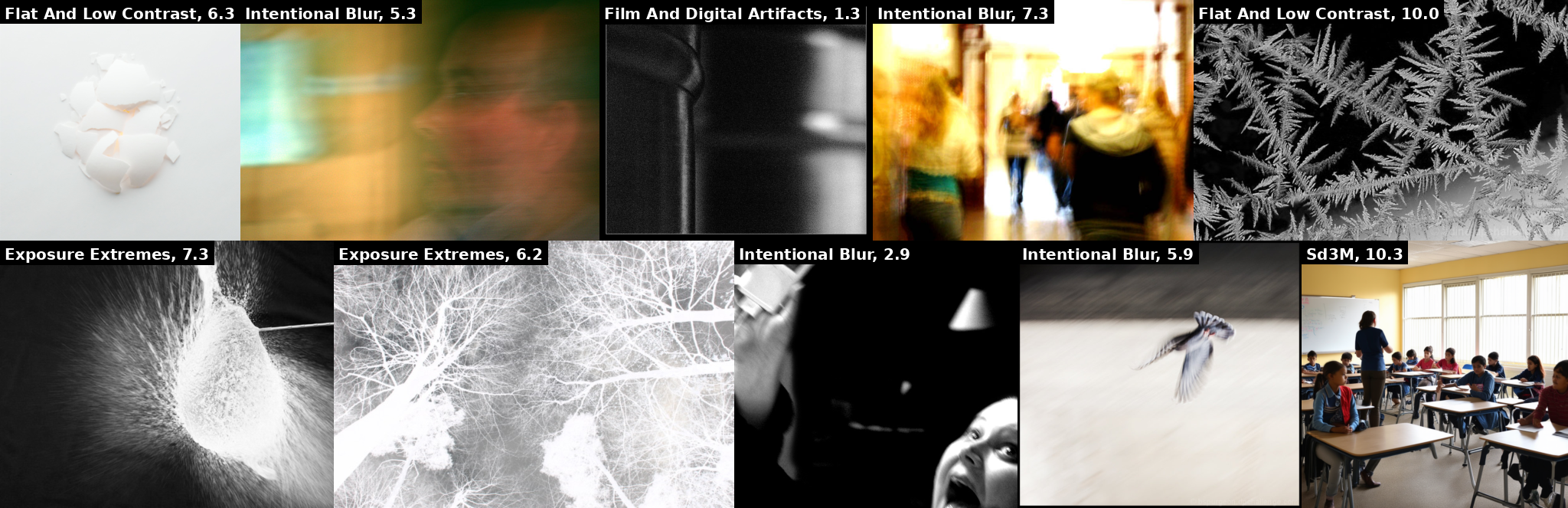

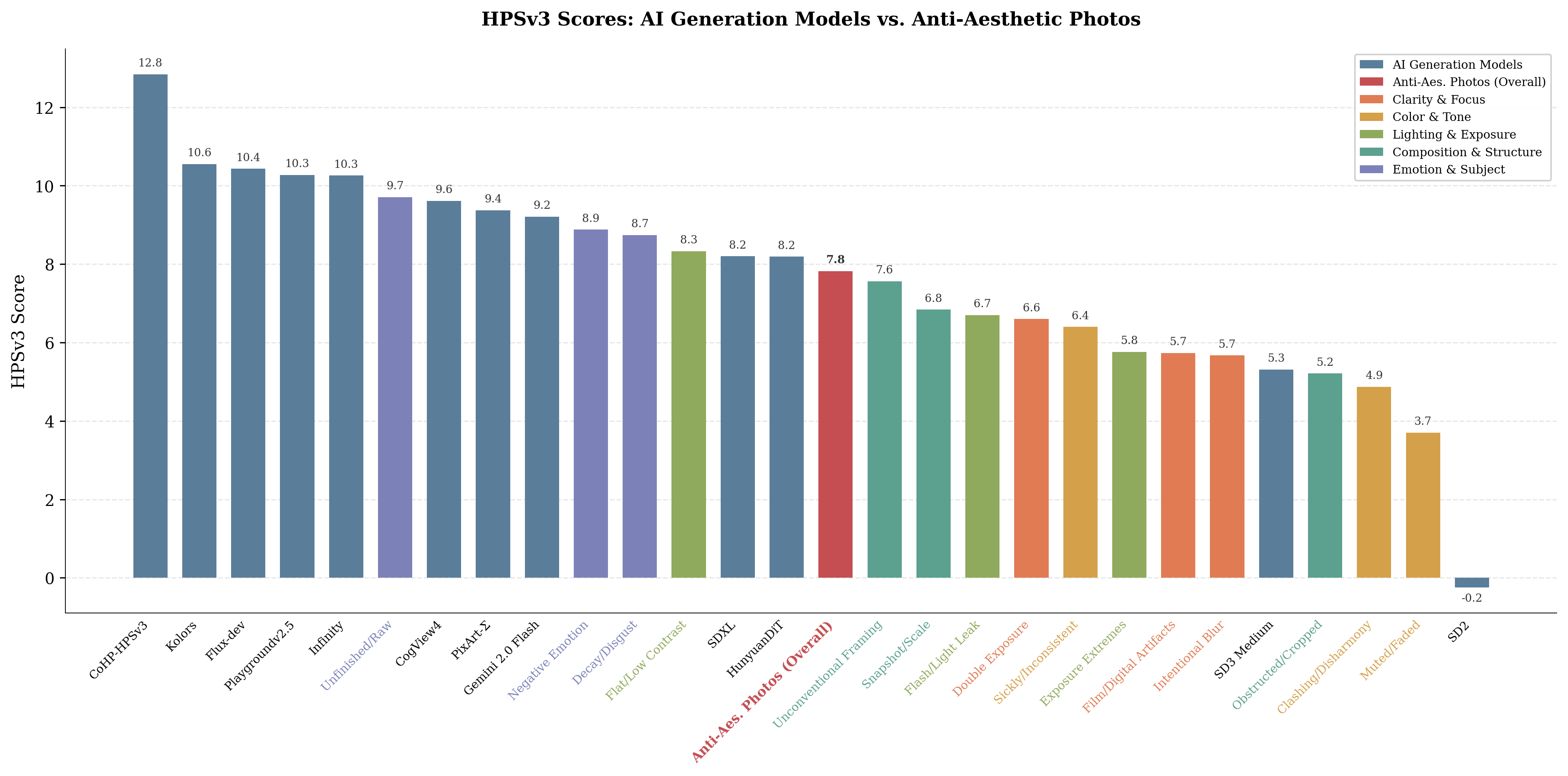

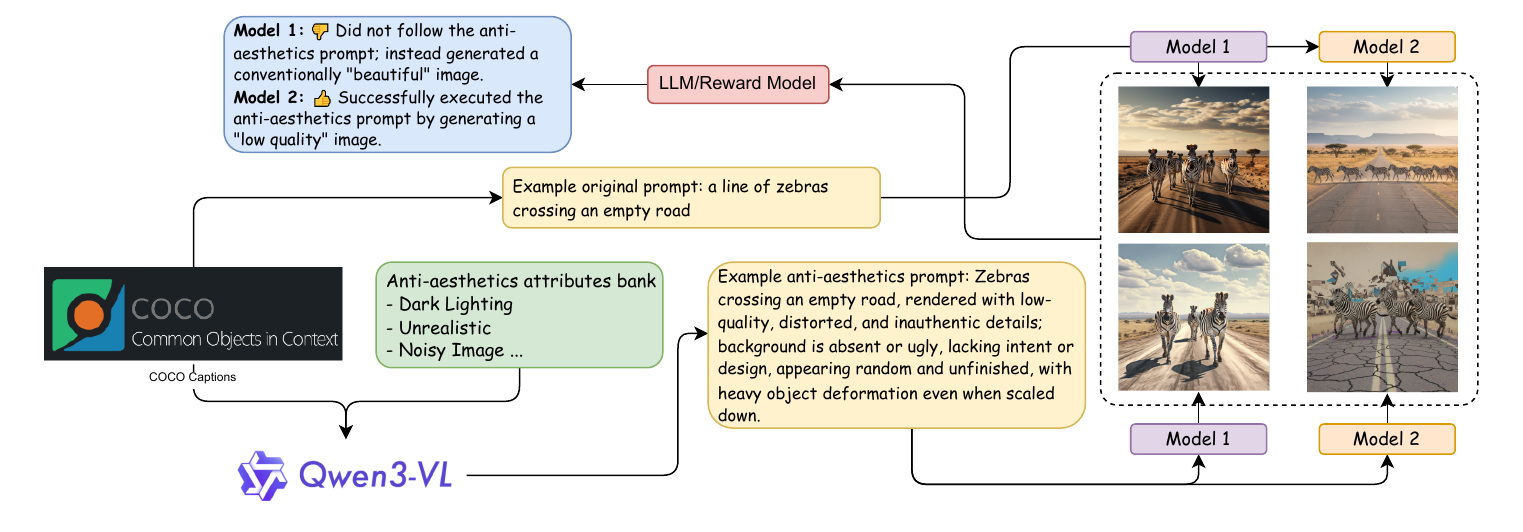

Over-aligning image generation models to a generalized aesthetic preference conflicts with user intent, particularly when users deliberately request anti-aesthetic outputs for artistic or critical purposes. This position paper argues that aesthetic alignment can prioritize developer-centered values over user autonomy and aesthetic pluralism.